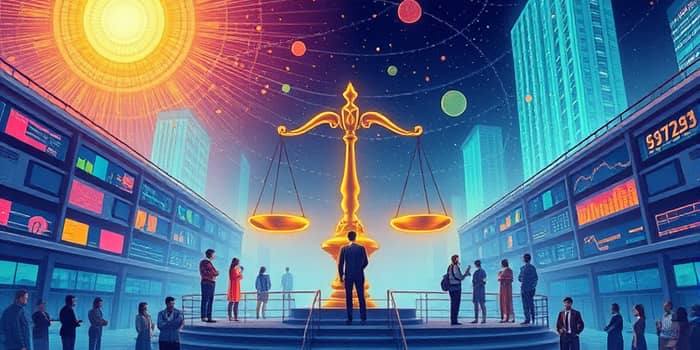

The rapid integration of artificial intelligence into financial services has unlocked unprecedented efficiency and insight. Yet this technological revolution carries a hidden cost: when left unchecked, algorithms can perpetuate systemic inequities rooted in flawed data and opaque logic. As we build the next generation of financial decision systems, we must confront not only technical challenges but also moral imperatives.

This article explores how organizations can design, implement, and oversee ethical AI frameworks that drive fair outcomes, bolster trust, and safeguard both institutions and customers.

Financial institutions worldwide are investing billions in AI to automate lending, underwriting, and risk management. AI’s promise of data-driven precision can transform credit allocation, wealth management, and insurance pricing. Yet when models learn from historical datasets that reflect longstanding biases, they can amplify rather than correct inequality.

Imagine a credit scoring system that treats a lack of traditional credit history as a sign of high risk, when in reality the gap often reflects applicants who are unbanked or who work in the gig economy. Without careful design, algorithms may unfairly penalize minority or low-income groups, undermining the very efficiency gains they were meant to deliver.

Research shows significant racial disparities in AI-driven lending decisions. Black applicants often face tougher requirements for equivalent products and services, a phenomenon driven by historical lending practices encoded into modern models.

These gaps arise because models ingest uneven data that link demographics to risk, often without context. In the absence of transparent decision records, financial institutions struggle to detect or justify these outcomes, exposing themselves to legal challenges and reputational harm.
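One way to make such gaps visible is to compare approval rates across groups in a decision log. The following is a minimal sketch, not a compliance tool: the group labels, the log format, and the sample counts are all hypothetical, and the ratio it reports is only one of several possible disparity measures.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Return {group: approval rate} from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}

def disparate_impact_ratio(rates):
    """Lowest group approval rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so there is no gap to measure
    return min(rates.values()) / highest

# Hypothetical decision log: (group label, was the application approved?)
decisions = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)

rates = approval_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates)
print(f"disparate impact ratio: {ratio:.2f}")
```

A ratio near 1.0 means the groups are approved at similar rates. A rule of thumb borrowed from US employment guidelines flags ratios below 0.8 for closer review; whether that cutoff is appropriate for a given lending product is an assumption that each institution would need to examine.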

Several factors contribute to unfair AI decisions in finance. First, reliance on historical data embeds past discrimination into current models. Second, many advanced algorithms operate as black-box systems whose internal logic is difficult to interpret. Third, human design choices, from variable selection to model objectives, can inadvertently favor one demographic group over another.

For example, zip codes included as features may act as proxies for race or income. Without deliberate safeguards, such variables can skew risk assessments and produce self-reinforcing feedback loops that lock certain communities out of credit access.
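A simple way to check whether a feature behaves like a proxy is to ask how well that feature alone guesses group membership, compared with always guessing the largest group. The sketch below assumes a categorical feature and uses made-up zip codes and group labels; the function name `proxy_strength` is hypothetical, and the accuracy is measured on the same data it is fitted to, so it will look optimistic when many feature values are rare.

```python
from collections import Counter, defaultdict

def proxy_strength(feature_values, groups):
    """Return (baseline_accuracy, feature_accuracy).

    baseline_accuracy: always guess the most common group.
    feature_accuracy: guess the most common group within each feature value.
    """
    n = len(groups)
    baseline = Counter(groups).most_common(1)[0][1] / n

    by_value = defaultdict(Counter)
    for value, group in zip(feature_values, groups):
        by_value[value][group] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_value.values())
    return baseline, correct / n

# Hypothetical data: two zip codes with very different group make-up
zip_codes = ["10001"] * 50 + ["10002"] * 50
groups = ["a"] * 45 + ["b"] * 5 + ["a"] * 10 + ["b"] * 40

baseline, with_zip = proxy_strength(zip_codes, groups)
print(f"baseline: {baseline:.2f}, using zip code: {with_zip:.2f}")
```

A large jump from the baseline to the feature-based accuracy suggests the feature carries demographic information, which is a reason to examine how the model uses it rather than proof of unfair treatment.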

Recognizing these risks, regulators are tightening scrutiny on algorithmic lending. Financial authorities now demand:

Institutions failing to comply face fines, litigation, and erosion of customer trust. Conversely, those that embrace fair lending principles can differentiate themselves by offering more inclusive, accountable services.

Building fair algorithms requires a holistic approach spanning data, model design, and governance. Key strategies include:

By weaving these elements into development cycles, organizations can catch unfair patterns before deployment and respond swiftly to emerging issues.

Leading institutions adopt structured frameworks to govern AI fairness. One effective approach is the five-step mitigation process:

Complementing this framework, fairness-aware model architectures can enforce parity constraints or adjust decision thresholds to achieve equitable outcomes without significantly sacrificing accuracy.
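As an illustration of threshold adjustment, the sketch below picks a separate score cutoff for each group so that every group is approved at roughly the same target rate. It is one simple post-processing approach under stated assumptions, not a description of any particular institution's method: the scores, group names, and target rate are hypothetical, and whether group-specific cutoffs are permissible depends on the applicable law.

```python
def threshold_for_rate(scores, target_rate):
    """Score cutoff that approves roughly target_rate of these applicants."""
    ranked = sorted(scores, reverse=True)
    k = round(target_rate * len(ranked))
    if k == 0:
        return float("inf")  # approve no one
    return ranked[k - 1]  # applicants scoring >= this cutoff are approved

def parity_thresholds(scores_by_group, target_rate):
    """Return {group: cutoff} so each group is approved at about target_rate."""
    return {
        group: threshold_for_rate(scores, target_rate)
        for group, scores in scores_by_group.items()
    }

def approval_rate(scores, cutoff):
    return sum(s >= cutoff for s in scores) / len(scores)

# Hypothetical model scores for two groups
scores_by_group = {
    "group_a": [0.9, 0.85, 0.8, 0.7, 0.6, 0.55, 0.5, 0.4, 0.3, 0.2],
    "group_b": [0.7, 0.6, 0.5, 0.45, 0.4, 0.35, 0.3, 0.2, 0.15, 0.1],
}

cutoffs = parity_thresholds(scores_by_group, target_rate=0.4)
for group, scores in scores_by_group.items():
    shared = approval_rate(scores, 0.6)          # one cutoff for everyone
    adjusted = approval_rate(scores, cutoffs[group])
    print(group, f"shared cutoff: {shared:.1f}", f"adjusted: {adjusted:.1f}")
```

With a single cutoff of 0.6 the two groups in this toy data are approved at different rates, while the adjusted cutoffs bring both to the target. Tied scores at the cutoff can push a group's rate slightly above the target, and the accuracy cost of the adjustment would need to be measured on real data.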

Amazon’s experimental recruitment AI famously learned to penalize female applicants because it was trained on historical résumés drawn from a male-dominated applicant pool, mirroring the biases of past hiring. Google’s photo recognition system once misclassified Black individuals as animals, revealing how unchecked data can lead to dehumanizing errors. These examples illustrate that even powerful organizations can falter without diligent oversight.

In finance, similar pitfalls could translate into widespread exclusion from credit, mortgages, or insurance. Learning from these cases underscores the importance of embedding ethical considerations at every stage of AI development.

The future of fair AI in finance hinges on collaboration between technologists, regulators, and civil society. Academic research on fairness metrics continues to advance, while industry consortia are developing open standards for model documentation and bias testing.

Emerging techniques such as counterfactual testing, which checks whether a model's output changes when a single input (for example, a demographic attribute or a likely proxy for one) is altered while everything else is held fixed, offer promise for deeper insights into model behavior. As AI investments near $200 billion globally, now is the moment to ensure these resources build a more equitable financial system rather than entrench existing divides.
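A counterfactual test can be sketched in a few lines: score an applicant, change one input while holding the rest fixed, and compare the two outputs. Everything here is hypothetical, including the `toy_model` scorer, its coefficients, and the applicant fields; the toy model is deliberately made sensitive to zip code so that the check has something to find.

```python
def counterfactual_gap(model, applicant, attribute, alternative):
    """Change in model output when only `attribute` is set to `alternative`."""
    altered = {**applicant, attribute: alternative}
    return model(altered) - model(applicant)

def toy_model(applicant):
    # Hypothetical scorer: income raises the score, one zip code lowers it
    score = 0.5 + 0.004 * (applicant["income_k"] - 50)
    if applicant["zip_code"] == "10002":
        score -= 0.15
    return score

applicant = {"income_k": 60, "zip_code": "10001"}
gap = counterfactual_gap(toy_model, applicant, "zip_code", "10002")
print(f"score change when only the zip code differs: {gap:+.2f}")
```

A gap close to zero means the changed input had little direct effect for this applicant. Running the same check across many applicants and attributes gives a fuller picture, although it will not catch effects that flow through other correlated inputs.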

AI has the potential to democratize access to credit, personalize financial advice, and optimize risk management. But without intentional design and governance, it risks perpetuating injustice at scale. By prioritizing ethical AI practices, financial institutions can harness the power of intelligent systems while upholding principles of fairness and inclusion.

Ultimately, ensuring that algorithms serve society ethically is not just a technical challenge—it is a collective responsibility that demands vigilance, creativity, and unwavering commitment to equity.
